Popular LLMs' training data: what do they use?

The training data behind popular LLMs appears to be universal and generic. That is a large part of why these models are so popular: they seem to know an answer to more or less everything. But how do they arrive at those answers? Where do they get the data from? Let's search the web the old-fashioned way and find out.

Popular LLMs training data types

The training data for these models comes from all around the world, and we humans are the ones who provide it: it is our work that gets fed into a model. LLM training data consists of huge, carefully curated datasets designed to provide high quality, diversity and relevance. The quality and cleanliness of the data are crucial.

0. Corpus (corpora)

A corpus (plural: corpora) is a structured collection of language data, such as written texts or transcriptions of spoken language, compiled in digital form. Corpora serve as fundamental datasets for linguistic research, natural language processing (NLP) and LLM training.

1. Web Data

Large-scale web crawling projects such as Common Crawl* provide enormous amounts of raw text from publicly available sources, including websites, blogs, forums, articles and much more. This source is virtually unlimited and diverse. However, it requires considerable cleanup and curation to remove "trash data" such as spam, duplicates, low-quality content and other irrelevant information. Projects like RefinedWeb process huge numbers of tokens (trillions) using complex filtering to extract high-quality text (mainly English).

*Common Crawl is a nonprofit founded in 2007. It crawls the web and provides an open repository of web data that is free to access.

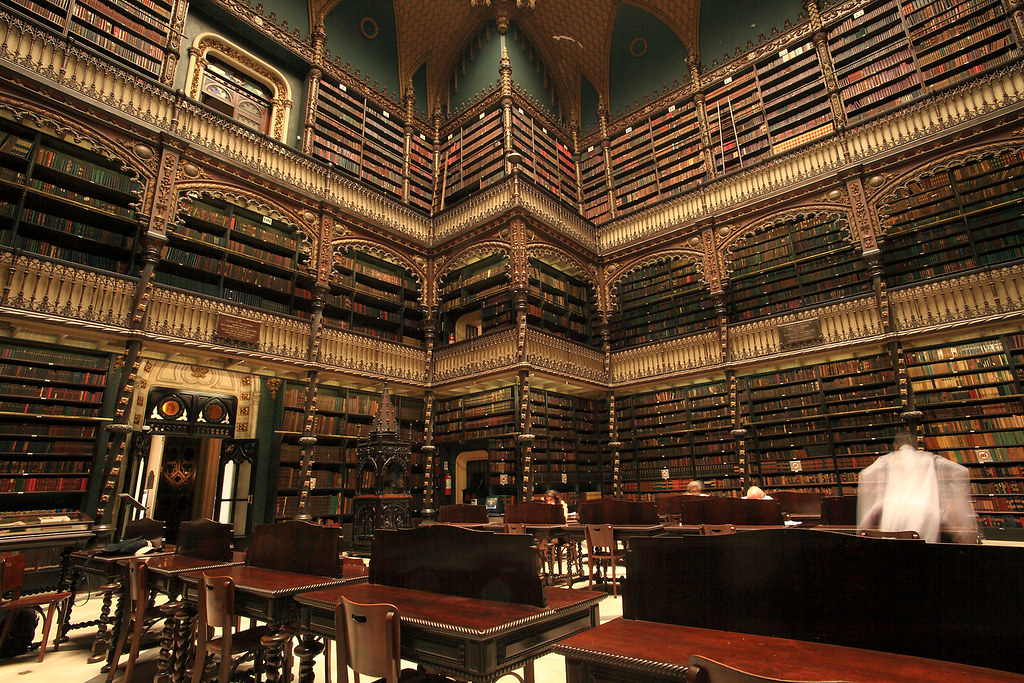

2. Books

Digitized books span many genres, including fiction, non-fiction, encyclopedias and academic literature. They are an ideal source of high-quality, structured training data for LLMs. Book datasets offer professionally edited language and balanced stylistic diversity, which helps models learn coherent and complex written expression. Access usually comes through public domain books or licensed corpora.

3. Scientific literature, publications, papers, patents

Datasets built from scientific articles in repositories such as CORE, arXiv and PubMed, or from patent offices, provide precise technical language and factual knowledge. Peer review of many of these papers makes them higher-quality training data for LLMs. Such data improves a model's understanding of scientific terminology, reasoning and formal writing styles, and improves its performance on technical and academic tasks. Because the model has seen tokens encoded from scientific and technical language, the difference can become visible when we ask it the same question in a "slang" way versus a "scientific, technical" way.

4. Conversational

Dialogue data scraped from online social networks and forums such as Reddit, any "Stack" board and news websites improves a model's ability to "chat" with us, providing more human-like interactive context. Remember that this is all based on probability. This data captures natural conversational flow, question-and-answer and request-and-response dynamics, and informal language, making models more fluent in dialogue and more responsive to user input.
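
The remark that this is all based on probability can be made concrete with a toy example. The sketch below is not how a real LLM works internally (those use neural networks over tokens, not word counts); it only illustrates the idea of estimating "what comes next" from dialogue data. The function names and the sample sentences are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count, for every word, which words follow it in the training sentences."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, word):
    """Turn the raw follow-up counts for `word` into probabilities."""
    following = counts.get(word.lower())
    if not following:
        return {}  # word never seen with a successor
    total = sum(following.values())
    return {w: c / total for w, c in following.items()}


# Hypothetical mini "conversation" corpus.
dialogue = ["how are you", "how are things", "how is it going"]
model = train_bigrams(dialogue)
print(next_word_probs(model, "how"))  # "are" is twice as likely as "is"
```

The more conversational text the counts are built from, the closer the probabilities get to how people actually talk, which is the same intuition behind feeding dialogue data to much larger models.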

5. Code Data

Large collections of source code from public repositories on GitHub, Bitbucket and similar platforms train models to understand programming languages, coding patterns and software development workflows. Including code helps LLMs assist with code generation, debugging and explanation across many programming languages. At this point you may wonder why a popular LLM needs code in its training data when most people never use it. That is exactly the point. A model could be trained on "only" some kinds of data, which would make it faster and cheaper. It is the YAGNI rule: you ain't gonna need it anyway.

6. Specialized math training data

Datasets such as Proof-Pile, Numdam, zbMATH and others are curated corpora that focus on mathematical proofs, logical reasoning and complex calculation tasks. They improve a model's capacity for precise reasoning, mathematical language comprehension and symbolic manipulation.

Curation Process

- Filtering involves language identification to exclude non-target languages.

- Line-wise and document-wise filtering remove low-quality, spammy, or irrelevant content.

- Deduplication uses fuzzy and/or exact matchers to remove repeated content, preventing model overfitting to duplicates.

- For conversations, techniques ensure temporally consistent and causal progression of dialogue to improve context understanding.

- Code and math datasets are processed similarly to maintain quality and relevance.
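
The filtering and deduplication steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the pipeline used by RefinedWeb or any other project: the thresholds, the helper names and the quality heuristic are all hypothetical, and language identification is left out because it normally needs a trained classifier. Fuzzy matching here uses Jaccard similarity over word 3-grams, compared pairwise, which is fine for a demo but too slow for web-scale data.

```python
import hashlib
import re


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def looks_low_quality(doc, min_words=5, max_symbol_ratio=0.3):
    """Document-wise heuristic: too short, or dominated by symbols (assumed thresholds)."""
    if len(doc.split()) < min_words:
        return True
    symbols = sum(1 for ch in doc if not (ch.isalnum() or ch.isspace()))
    return symbols / len(doc) > max_symbol_ratio


def shingles(text, n=3):
    """Set of word n-grams used for approximate comparison."""
    words = normalize(text).split()
    return {" ".join(words[i:i + n]) for i in range(max(1, len(words) - n + 1))}


def jaccard(a, b):
    """Overlap of two shingle sets, from 0.0 (disjoint) to 1.0 (identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def curate(docs, fuzzy_threshold=0.8):
    """Drop low-quality documents, then exact and near duplicates, keeping first occurrences."""
    seen_hashes = set()
    kept, kept_shingles = [], []
    for doc in docs:
        if looks_low_quality(doc):
            continue
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest in seen_hashes:  # exact match after normalization
            continue
        sh = shingles(doc)
        if any(jaccard(sh, other) >= fuzzy_threshold for other in kept_shingles):
            continue  # fuzzy match against something already kept
        seen_hashes.add(digest)
        kept.append(doc)
        kept_shingles.append(sh)
    return kept
```

Given a list of strings, `curate(docs)` returns the surviving documents in their original order. Real pipelines replace the pairwise comparison with techniques such as MinHash so that the fuzzy step scales to billions of documents.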

Who Provides the Data

- Many datasets are open-source or publicly licensed collections maintained by research groups or organizations such as EleutherAI (The Pile), Common Crawl, and academic venues.

- Specialized curated datasets like RefinedWeb are constructed by AI research institutes such as the Technology Innovation Institute (TII), creators of Falcon.

- Some datasets, such as certain book corpora or scientific archives, come from public institutions or repositories that make research and literature openly accessible.

Why This Matters

This meticulous preparation of data results in a diverse, clean and comprehensive training corpus. It gives LLMs broad knowledge, detailed understanding, support for multiple languages (where applicable) and proficiency across domains, from general language to coding and scientific reasoning. This high-quality data backbone is key to making models like Falcon, LLaMA, and OPT powerful and flexible across diverse tasks. The open question is whether we want that broad knowledge, or whether we should specialize and narrow down the amount of input data.

Summary

Models train on a huge number of datasets: a vast mixture of web text, books, scientific papers, conversations, code repositories and specialized math datasets. All of it is filtered, cleaned and balanced by research teams to optimize learning efficiency, accuracy and safety.
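
One way to picture "balanced" is as a token budget split across sources. The sketch below is only an illustration: the source names follow the categories in this article, but the weights and the budget are made-up numbers, not the mixture of any real model, and `allocate_tokens` is a hypothetical helper.

```python
def allocate_tokens(total_tokens, weights):
    """Split a token budget across data sources in proportion to their weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return {src: round(total_tokens * w / total_weight) for src, w in weights.items()}


# Hypothetical mixture: web text dominates, smaller shares for the rest.
mixture = {"web": 6, "books": 2, "code": 1, "papers": 1}
print(allocate_tokens(1_000, mixture))
```

Changing the weights is the knob that shifts a model toward broad knowledge or toward a narrower specialty.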

Popular models and their training data

The table below gives a general overview of the data that is fed to some popular LLMs.

| Model Name | Size (GB) | Parameters (B) | Context Window (tokens) | Training Data Description |
|---|---|---|---|---|
| Falcon 7B | ~13-14 | 7 | 2,048 | Trained on RefinedWeb (filtered web data, 5T+ tokens), curated books, scientific papers, patents, conversations, code, and math datasets |
| GPT4All / LLaMA 7B | ~14 | 7 | 2,048 | Mixed public web text, books, and dialogue datasets; fine-tuned for chat and instruction tasks |
| MPT 7B | ~13 | 7 | 4,096 | Large-scale web data including The Pile; optimized for longer context windows |
| Falcon 40B (quant.) | ~20+ (quant.) | 40 | 2,048 | Scaled-up Falcon data with a high-quality curated mixture; requires quantization and offloading |
| LLaMA 13B (quant.) | ~26 (16-bit; smaller once quantized) | 13 | 2,048 | Multilingual web crawl, books, user-generated text; quantized for local execution |
| GPT-NeoX 20B (quant.) | ~40-45 (16-bit; smaller once quantized) | 20 | 2,048 | Large web and code datasets; needs quantization and offloading |
| StableLM 13B | ~26 | 13 | 2,048 | Open-source datasets including web text and curated sources |
| GPT-OSS 120B | ~55-60 (est.) | 117* | 128,000** | Trained mostly on English text focused on STEM, coding and general knowledge; uses a mixture-of-experts architecture; extremely large context window |
| GPT-OSS 20B | ~10-12 (est.) | 21* | 128,000** | Similar domain focus to the 120B version, with emphasis on STEM, coding, tool use and reasoning; designed for edge devices with 16 GB of memory |
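
The sizes in the table follow roughly from the parameter count and the number of bits stored per weight. Here is a rough sketch of that arithmetic, assuming 1 GB = 10^9 bytes and counting only the weights (no activations, cache or file overhead). `weight_size_gb` is a hypothetical helper, not part of any library.

```python
def weight_size_gb(params_billions, bits_per_weight):
    """Approximate size of the model weights alone, in GB (1 GB = 10**9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# 7B parameters at 16 bits per weight: about 14 GB, in line with the Falcon 7B row.
print(weight_size_gb(7, 16))

# 40B parameters quantized to 4 bits per weight: about 20 GB, in line with the Falcon 40B row.
print(weight_size_gb(40, 4))
```

This is why quantization matters for running models locally: going from 16 bits to 4 bits per weight cuts the footprint to roughly a quarter.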